Query Fan-Out: Google AI Mode's Hidden Ranking Factor

Raman Singh

Raman Singh is a highly skilled marketing professional who serves as the head of marketing at Copyrocket AI.

Most SEOs are still optimizing for one query, one page, one ranking position. But behind every search submitted to Google AI Mode, the system quietly generates 8 to 12 additional searches you never typed — and your content either shows up in those hidden searches or it doesn't. Ekamoira's original study of 173,902 URLs found that 88% of brands miss AI citation opportunities entirely because they only optimize for the surface-level keyword. This guide decodes every layer of query fan-out — how it works mechanically, what Google's own patent reveals, and exactly how to structure your content to earn citations across the full fan-out surface.

Key Takeaways

Query fan-out is the process where Google AI Mode decomposes a single user query into 8–12 related sub-queries, executes them in parallel, and synthesizes a unified answer — meaning your content competes not for one search but for a cluster of related searches simultaneously.

Pages that rank across fan-out sub-queries are 161% more likely to earn AI citations than pages that only rank for the head keyword. Covering the full topic cluster is the primary lever for AI visibility.

Google's query fan-out patent (US11663201B2) describes signals tied to topical breadth, entity relationships, internal linking depth, source diversity, and content freshness — not just keyword relevance.

Fan-out sub-queries are not stable. Ekamoira's research found only 27% query stability, meaning 73% of sub-queries change with each search — which is why static keyword optimization fails and why topical depth with 80%+ topic coverage is the only durable strategy.

Websites covering 70% or more of the related query ecosystem for a topic earn citations in 4.3x more AI-generated responses than sites with narrower coverage, according to research cited across Conductor and Ekamoira.

95% of fan-out sub-queries show zero monthly search volume in traditional keyword tools — meaning they are completely invisible to standard SEO research but are the primary gatekeepers of AI generative visibility.

What Is Query Fan-Out?

Query fan-out is the mechanism by which AI search systems transform one user question into many.

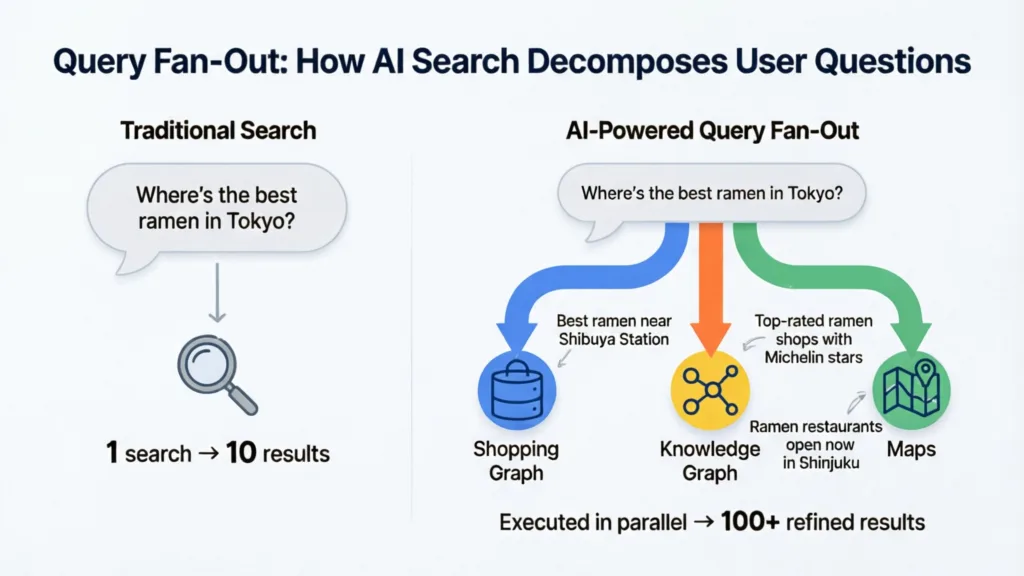

When a user types a query into Google AI Mode, the system does not run a single search and return a list of ranked links.

Instead, the AI — powered by Google's custom Gemini models — deconstructs the query into its core components, generates a set of related sub-queries, executes them simultaneously across Google's search index and specialized databases (Shopping Graph, Knowledge Graph, Finance, Maps, and more), then synthesizes the results into one coherent answer.

Google VP of Product for Search Robby Stein described this process at Google I/O 2025: "If you're asking a question like things to do in Nashville with a group, it may think of a bunch of questions like great restaurants, great bars, things to do if you have kids, and it'll start Googling basically."

That phrase — "it'll start Googling basically" — is the plainest summary of what fan-out means in practice. Your single query spawns a parallel research session Google runs on your behalf, invisibly, in under a second.

Search Engine Journal's reporting on the Google I/O announcement confirmed that AI Mode typically generates 8–12 sub-queries per standard search, with Google's Deep Search feature capable of issuing hundreds of sub-queries for complex research tasks — taking several minutes to complete.

How Query Fan-Out Differs from Traditional Search

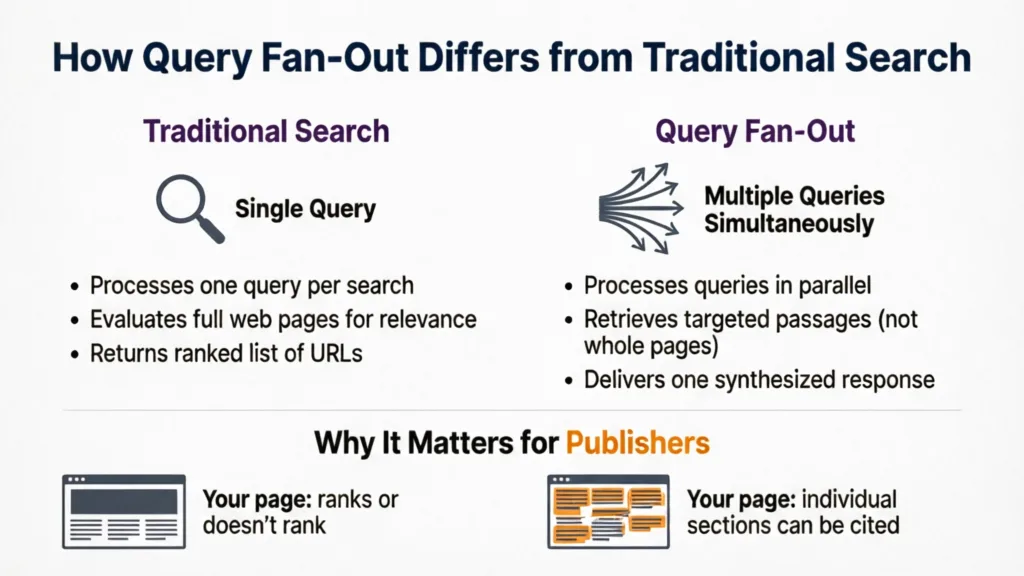

Traditional Google processes one query per search, evaluates full web pages for relevance, and returns a ranked list. Query fan-out processes multiple queries simultaneously, retrieves targeted passages from pages rather than ranking whole URLs, and delivers one synthesized response.

The distinction matters for publishers: in traditional search, your page either ranks or it doesn't. In query fan-out, individual sections of your page can be cited independently across multiple sub-queries.

A single well-structured article about running shoes could be cited across sub-queries for cushioning recommendations, trail versus road comparisons, budget options, and injury prevention — all from one AI Mode response triggered by "best running shoes for beginners."

Real-world example: Lululemon. Similarweb's AI Brand Visibility analysis revealed that Lululemon had strong AI citation presence for leggings and yoga content but near-zero visibility for running gear and sustainable activewear, two categories where it sells products. Competitors Athleta and Alo Yoga captured the AI citations for those sub-queries. Lululemon was absent from the AI responses users received for those searches, not because of a ranking problem, but because its content hadn't addressed the fan-out sub-queries for those specific intent clusters.

How Query Fan-Out Works: The Three-Layer Decomposition Model

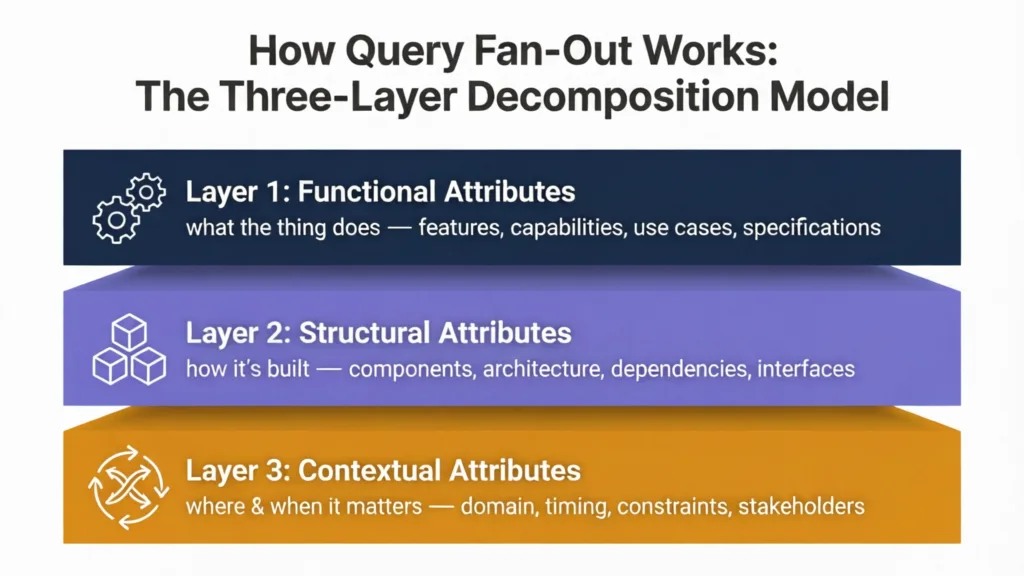

Understanding fan-out at a mechanical level is what separates tactical adjustments from a genuine strategy shift. The AI doesn't randomly generate sub-queries — it decomposes the original query through three distinct analytical layers.

Layer 1: Functional Attributes

The first decomposition layer targets what the thing does — features, capabilities, use cases, and specifications. If a user searches "moisturizers for dry skin," functional sub-queries include ingredient identification, product type comparisons (cream vs. gel vs. oil), and compatibility questions with other skincare products like retinol.

Layer 2: Intent Facets

The second layer targets why the user is asking — cost considerations, durability comparisons, purchase intent signals, and competitive alternatives. For the same moisturizer query, intent facets generate sub-queries around price points, brand comparisons, dermatologist recommendations, and "best value" filters.

Layer 3: Personal Context

The third layer adds user-specific context — experience level, location, device, search history signals, and stated constraints. This layer generates sub-queries like "moisturizer for sensitive skin," "fragrance-free options," or "moisturizers available at [local pharmacy chain]." WordLift's research on stochastic query decomposition notes that this personal context layer is the least predictable, because the sub-queries it generates vary based on individual user signals that content creators cannot see or target directly.

The practical implication is that you can reliably predict and optimize for Layers 1 and 2 through structured content planning. Layer 3 requires breadth — covering enough angles that some of your content naturally matches the personalized sub-queries different users trigger.
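As a rough illustration of the three layers, the sketch below expands one head query into candidate sub-queries using hand-written templates. The template lists, the `SubQuery` type, and the `expand_query` function are all hypothetical names chosen for this example. Google's actual decomposition is model-driven and not public, so treat this as a planning aid rather than a reproduction of the real mechanism.

```python
from dataclasses import dataclass

# Hypothetical templates per layer. Real fan-out is model-generated, not template-based.
LAYER_TEMPLATES = {
    "functional": ["{q} ingredients", "{q} cream vs gel vs oil", "{q} with retinol"],
    "intent": ["best value {q}", "{q} price comparison", "dermatologist recommended {q}"],
    "personal": ["{q} for sensitive skin", "fragrance-free {q}"],
}


@dataclass(frozen=True)
class SubQuery:
    layer: str
    text: str


def expand_query(head_query: str) -> list[SubQuery]:
    """Return one candidate sub-query per template, tagged with its layer."""
    return [
        SubQuery(layer, template.format(q=head_query))
        for layer, templates in LAYER_TEMPLATES.items()
        for template in templates
    ]


if __name__ == "__main__":
    for sub in expand_query("moisturizers for dry skin"):
        print(f"{sub.layer:>10}: {sub.text}")
```

Tagging each sub-query with its layer makes it easy to see whether your planned content leans too heavily on one layer, for example covering functional attributes well while ignoring intent facets.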

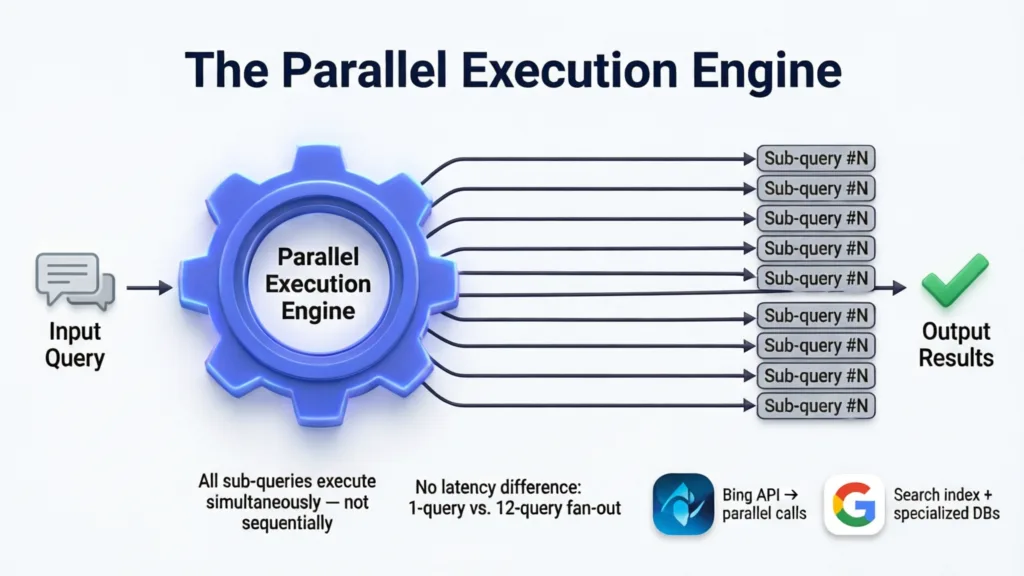

The Parallel Execution Engine

All sub-queries execute simultaneously — not sequentially. Research from Ekamoira's study confirmed that Google's infrastructure handles this parallel execution at query speed — the user experiences no latency difference between a traditional single-keyword search and a 12-query fan-out search.
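To make the idea of parallel execution concrete, here is a minimal sketch that runs a set of sub-queries concurrently with Python's standard `concurrent.futures` module. The `search_stub` function is a placeholder assumption standing in for a real retrieval call, and `fan_out` is a hypothetical name. Nothing here reflects Google's actual infrastructure.

```python
from concurrent.futures import ThreadPoolExecutor


def search_stub(sub_query: str) -> str:
    """Stand-in for a real retrieval call; returns a placeholder passage."""
    return f"passage for '{sub_query}'"


def fan_out(sub_queries: list[str], max_workers: int = 12) -> dict[str, str]:
    """Run every sub-query concurrently and collect results keyed by sub-query."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so zip pairs each query with its result.
        return dict(zip(sub_queries, pool.map(search_stub, sub_queries)))


if __name__ == "__main__":
    results = fan_out([
        "great restaurants in Nashville",
        "great bars in Nashville",
        "things to do in Nashville with kids",
    ])
    for query, passage in results.items():
        print(query, "->", passage)
```

Because each sub-query is resolved on its own, the total wait is driven by the slowest single lookup rather than the sum of all lookups, which is the property the section describes.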

For ChatGPT, parallel execution hits Bing's API simultaneously across multiple sub-queries. For Google AI Mode, it draws from the Search index plus specialized databases for shopping, local, news, and structured knowledge.

Tip: Parallel execution matters for your content strategy because each sub-query is resolved independently and simultaneously. A page that answers a sub-query thoroughly competes on equal footing with a page that answers it quickly and shallowly. Depth wins because the AI is looking for the best answer per sub-query, not the fastest.

The Query Fan-Out Statistics Every SEO Needs to Know

The data behind fan-out's impact on search visibility is extensive enough that generic SEO advice no longer applies to the informational queries where AI Mode is active.

The 88% Visibility Gap

Ekamoira's analysis of 173,902 URLs across 10,000 keywords found that 88% of brands miss AI citation opportunities because they optimize only for the head-term keyword, not for the full fan-out query cluster. This is the single most important statistic in query fan-out research: nearly 9 in 10 brands are invisible to AI Mode for the majority of searches their target customers submit — not because of a ranking problem, but because of a coverage problem.

The same study found only 32% overlap between traditional organic rankings and AI citations. A brand can hold the #1 organic ranking for a keyword and still fail to appear in AI Mode responses for that same search, simply because the AI's sub-queries surface different pages from different domains.

The 161% Citation Lift

Pages that rank across fan-out sub-queries are 161% more likely to earn AI Overview citations than pages optimized only for the head keyword. This finding from Ekamoira's research establishes a direct, quantifiable relationship between topic coverage breadth and AI citation frequency. The mechanism is straightforward: if the AI generates 10 sub-queries and your content addresses 8 of them, you are eligible for up to 8 citation slots in one AI Mode response. A competitor whose content addresses only 2 of those sub-queries is eligible for at most 2.

The 73% Instability Problem

Here is the query fan-out statistic most SEO articles ignore entirely: Ekamoira's research found that only 27% of fan-out sub-queries are stable — meaning 73% change with each search, even for the same head keyword. Two users searching the identical phrase in AI Mode will trigger different sub-query sets based on their context, history, and the AI's stochastic decomposition logic.

This instability is why targeting specific long-tail sub-queries as if they were traditional keywords fails. You cannot predict exactly which sub-queries the AI will generate — you can only ensure your content is broad and deep enough that whatever sub-queries it generates, some of your pages are relevant.

The Fan-Out Decay Curve from Ekamoira's model shows that content covering 80% or more of a topic retains 85.4% AI visibility despite the 73% instability — because broad topical coverage acts as a statistical buffer against unpredictable decomposition.

The Zero-Search-Volume Sub-Query Problem

Research compiled across multiple studies shows that 95% of fan-out sub-queries show zero monthly search volume in traditional keyword tools like Ahrefs, Semrush, or Google Keyword Planner. This creates a critical blind spot: SEOs using standard keyword research to decide what content to create are systematically ignoring 95% of the query space that AI Mode actually searches across.

The implication is counterintuitive but clear. Creating content for sub-queries that show zero keyword volume is now more important for AI visibility than targeting high-volume keywords — because those zero-volume sub-queries are precisely what the AI generates to fill out its synthesized answer.

Query Fan-Out Across Industries: What the Data Shows

Fan-out doesn't operate the same way across all industries. The scale of decomposition — how many sub-queries a single query generates — varies significantly by vertical, and so does the citation opportunity.

Healthcare

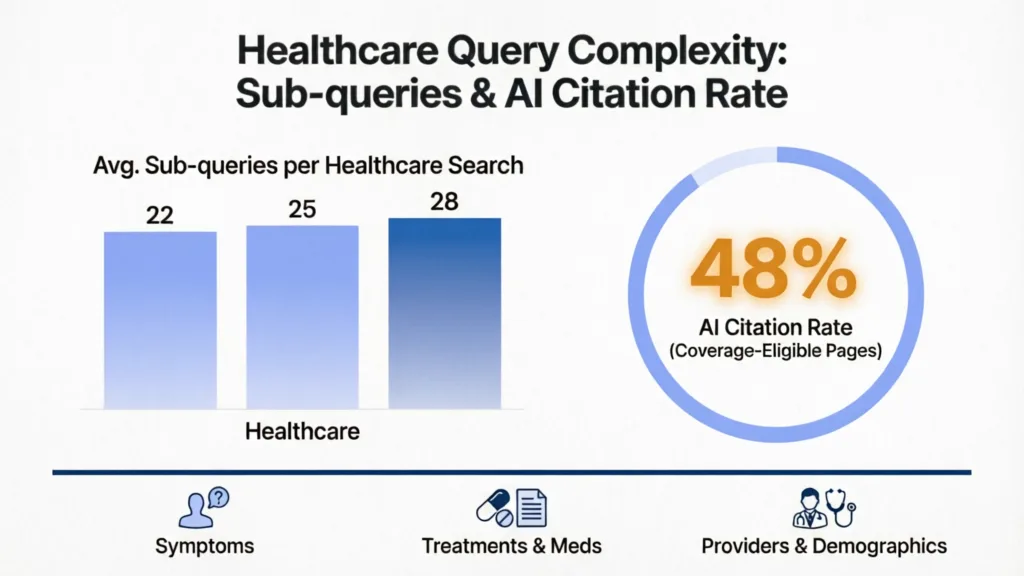

Ekamoira's industry-specific data found that healthcare queries generate 22–28 sub-queries per search — the highest of any vertical analyzed — with a 48% AI citation rate for pages meeting coverage thresholds.

Healthcare queries fan out extensively because they involve symptoms, treatments, medications, contraindications, demographics, and provider types as distinct sub-query branches.

Real-life scenario: A health system publishing content about "knee pain treatment" cannot cover the topic with one article. The AI will fan out into sub-queries for physical therapy approaches, surgical options, home remedies, age-specific advice, post-surgical recovery, and insurance coverage questions. Each of those is a separate sub-query branch requiring its own dedicated section or page to be citable.

E-Commerce

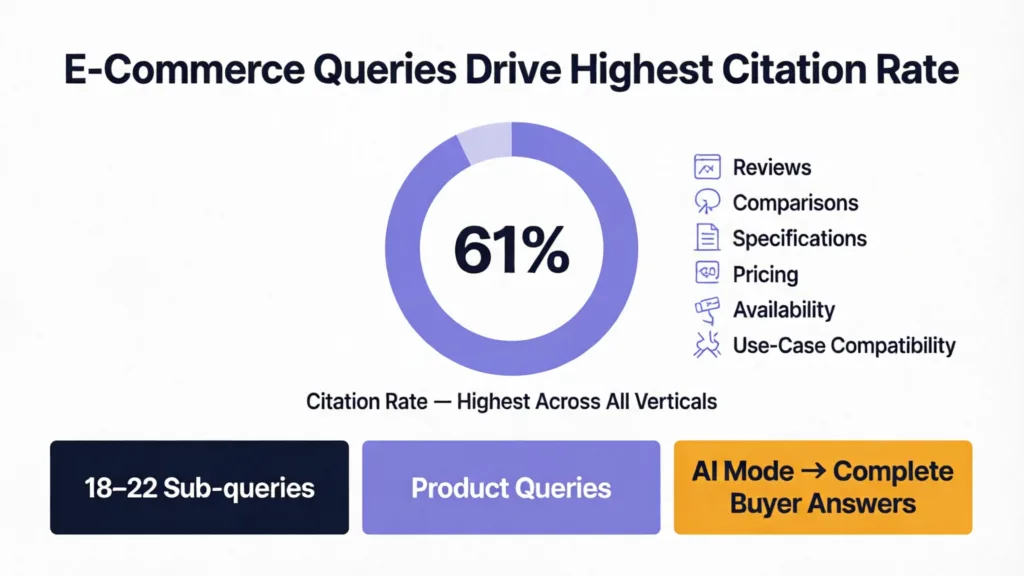

E-commerce queries generate 18–22 sub-queries and produce a 61% citation rate — the highest citation rate of any vertical. This is because product queries naturally decompose into reviews, comparisons, specifications, pricing, availability, and use-case compatibility — all areas where AI Mode seeks to give buyers a complete answer.

Real-world example: Similarweb's analysis of a protein powder brand found fan-out sub-queries covering ingredient safety, comparison with competitor brands, specific use cases (marathon training, weight loss, muscle gain), and flavor reviews. Brands whose content only addressed the primary product category — "best protein powder" — were consistently outcompeted by smaller brands that had created targeted content for each fan-out branch.

B2B and Finance

Finance queries generate 16–20 sub-queries with a 52% citation rate. KEO Marketing's B2B analysis found that enterprise B2B subjects require addressing an average of 40–60 related queries to achieve meaningful AI citation presence — a significantly larger content investment than most B2B marketing teams currently make.

What Google's Patent Reveals About Fan-Out Ranking Signals

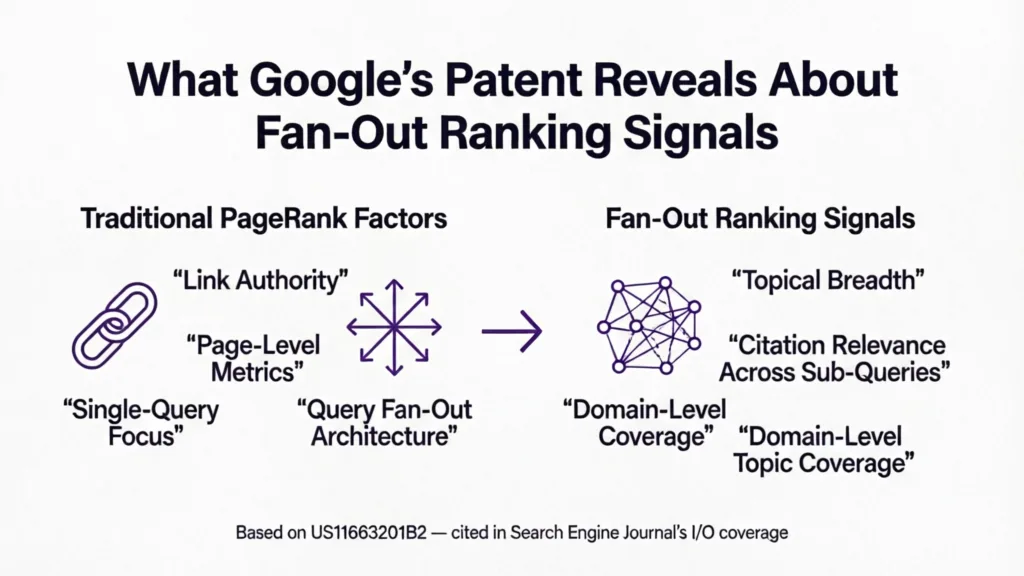

Most SEOs don't know a query fan-out patent exists. Google's patent US11663201B2, referenced in Search Engine Journal's I/O coverage, describes the signals the system uses to evaluate content for citation selection across fan-out sub-queries. These signals differ meaningfully from traditional PageRank factors.

Topical breadth. The patent describes signals measuring how broadly a domain covers a topic area — not just whether individual pages are relevant, but whether the site as a whole demonstrates comprehensive topic authority.

Entity relationships. The AI evaluates how well your content connects entities (people, products, concepts, locations) that naturally co-occur in the topic space. Content that acknowledges the relationships between related entities — not just mentioning them in isolation — scores higher on this signal.

Internal linking depth. Hub-and-spoke content architecture receives explicit weighting. Research found that sites with strong hub-and-spoke internal linking receive 47% more AI citations than sites with equivalent content quality but weak internal linking. The internal link structure signals to the AI which pages belong to a topic cluster, making the whole cluster collectively eligible for citations.

Source diversity. The patent describes signals for how well a page synthesizes information from multiple perspectives — studies, practitioner experience, user examples, and counter-arguments. Single-source content scores lower than multi-perspective content on the same topic.

Freshness. For time-sensitive topics, recency is weighted heavily. AI Mode prioritizes recent sources when sub-queries have a temporal component — "best [product] 2026," "current guidelines for [topic]," or any query where the user's implicit assumption is that the information should be current.

How to Optimize Your Content for Query Fan-Out

The following optimization framework is built directly from the research data above. It covers content planning, structural optimization, and measurement.

Tutorial: How to Map Your Fan-Out Query Cluster in 6 Steps

This is the foundational process. Do it before writing any new content and before deciding which existing content to update.

1. Start with your head keyword. Write down the primary topic or keyword you want to rank for (e.g., "email marketing for SaaS companies").

2. Run Google's "People Also Ask" for the head keyword. Expand every PAA result and collect all the questions. These are Google's own signals about what the AI considers related sub-queries for this topic.

3. Run the same query through ChatGPT and Gemini. Ask each: "What are all the sub-questions someone searching '[your keyword]' might want answered?" Record every angle each AI surfaces. These simulate the sub-queries the fan-out mechanism generates.

4. Check Reddit and Quora. Search for threads about your topic. Look for recurring questions in comments; these represent the personal context layer of fan-out that formal keyword tools miss entirely.

5. Check competitor content structure. Look at the 3–5 top-ranking pages for your head keyword and audit their H2/H3 structure. Identify sub-topics they cover that you don't; these are fan-out gaps in your content.

6. Build a coverage matrix. Create a simple spreadsheet with every sub-question you collected across steps 2–5 as rows, and your existing pages as columns. Mark which pages currently address each sub-question. The empty cells are your content gaps, and filling them is your AI citation optimization plan.
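If you prefer a script to a spreadsheet, the coverage matrix from the final step can be sketched in a few lines of Python. The sub-questions, page URLs, and function names below are made-up examples. In practice you would load your own collected questions and decide by hand (or by review) which pages answer which question.

```python
# Hypothetical inputs: collected sub-questions and the questions each page answers.
sub_questions = [
    "what is email marketing for SaaS",
    "best email tools for SaaS",
    "SaaS onboarding email examples",
    "email marketing vs in-app messaging",
]
page_answers = {
    "/blog/saas-email-guide": {"what is email marketing for SaaS", "best email tools for SaaS"},
    "/blog/onboarding-emails": {"SaaS onboarding email examples"},
}


def build_matrix(questions, pages):
    """Map each sub-question to the list of pages that currently address it."""
    return {q: [url for url, answered in pages.items() if q in answered] for q in questions}


def coverage_gaps(matrix):
    """Sub-questions with no covering page (the empty rows of the matrix)."""
    return [q for q, urls in matrix.items() if not urls]


matrix = build_matrix(sub_questions, page_answers)
gaps = coverage_gaps(matrix)
coverage = 1 - len(gaps) / len(matrix)

if __name__ == "__main__":
    print(f"coverage: {coverage:.0%}")
    print("gaps:", gaps)
```

The `coverage` figure is simply the share of collected sub-questions that at least one page addresses, which gives you a number to compare against whatever coverage target you set.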

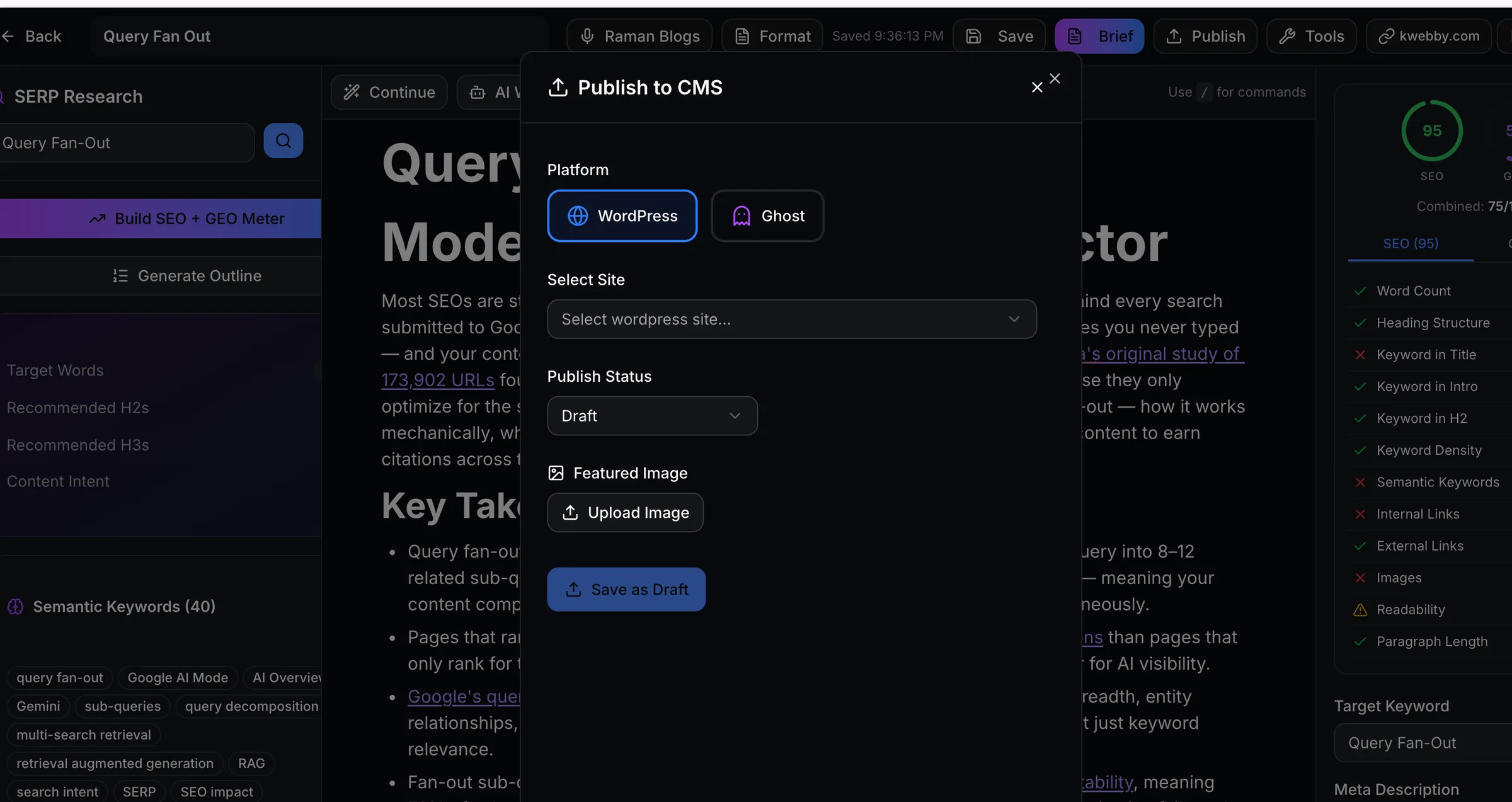

Alternatively, Use Our GEO + SEO AI Writer

Copyrocket AI Editor helps you write content not only for traditional SEO but also for GEO across Google AI Mode, ChatGPT, Gemini, Claude, Perplexity, and more.

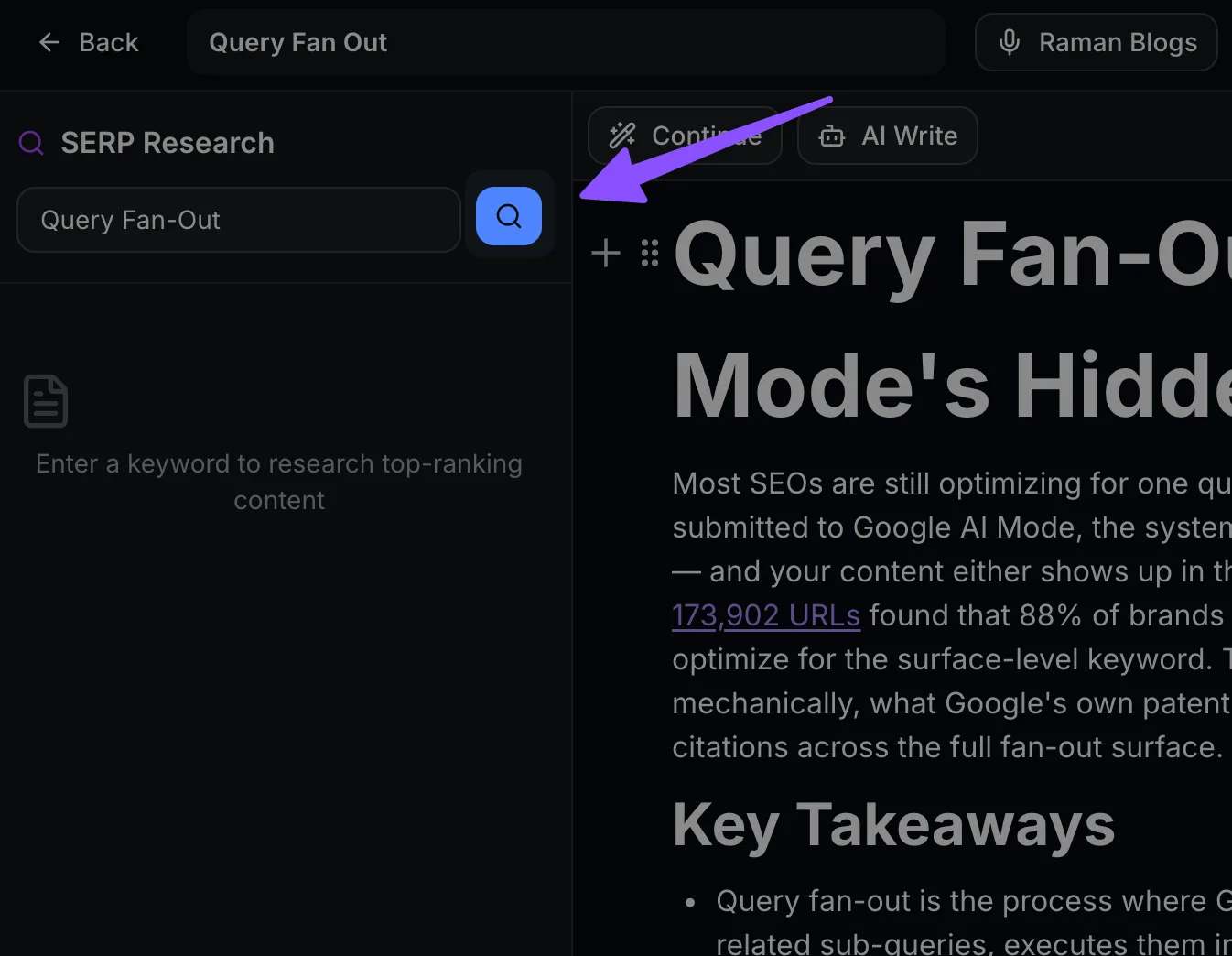

Sign up for Copyrocket here and navigate to AI Tools > AI Editor.

Type the keyword you want to rank for, or research one using our AI Keyword tool, Keyword Research tool, or Topical Map Generator.

First, research the SERPs (Google, Gemini, ChatGPT, Claude, Perplexity) for your keyword, as shown below:

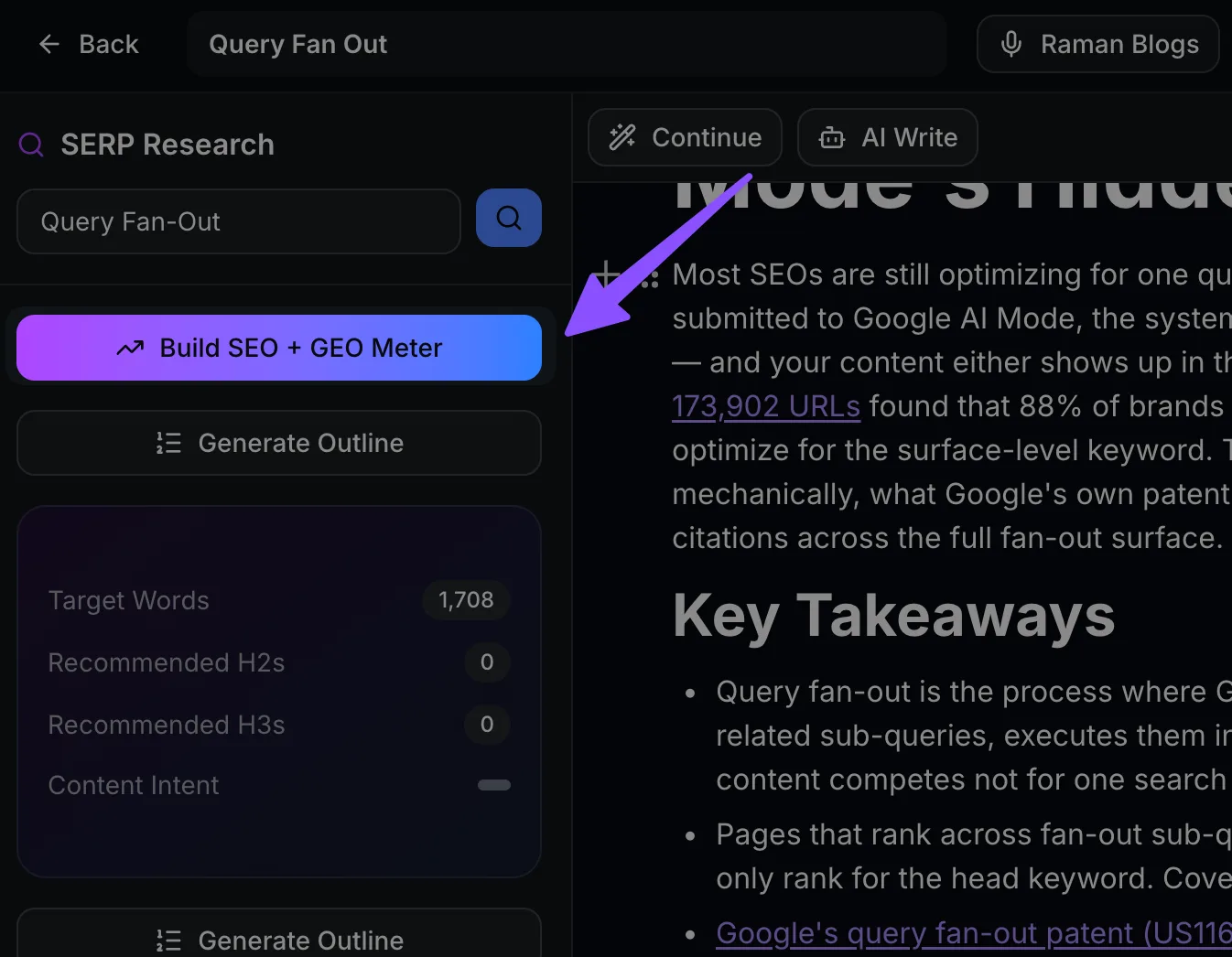

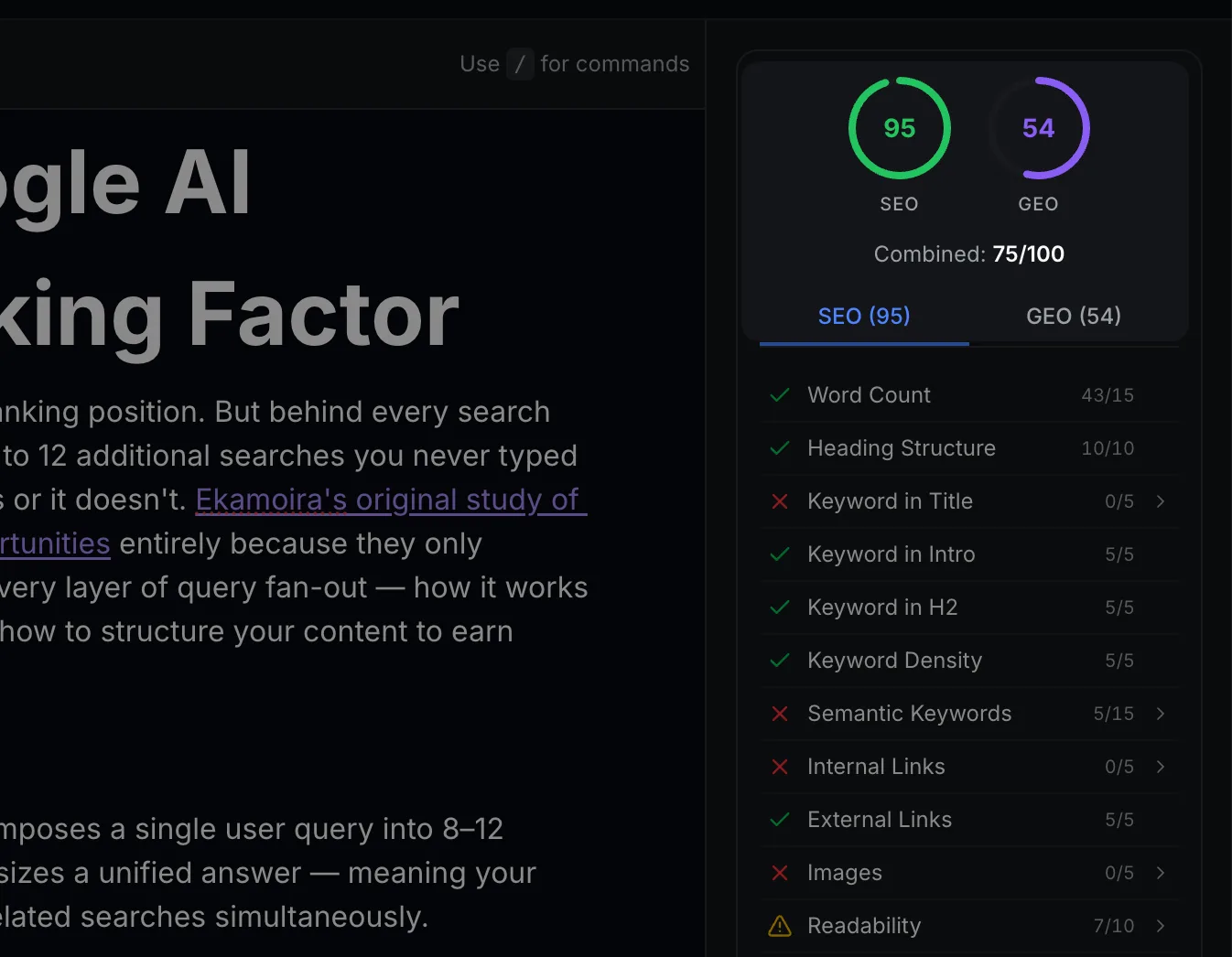

Now that the Editor has all the context, it's time to build the SEO GEO Meter. Click on it and you will see a list of semantic keywords plus a GEO and SEO checklist.

The details appear in the right sidebar:

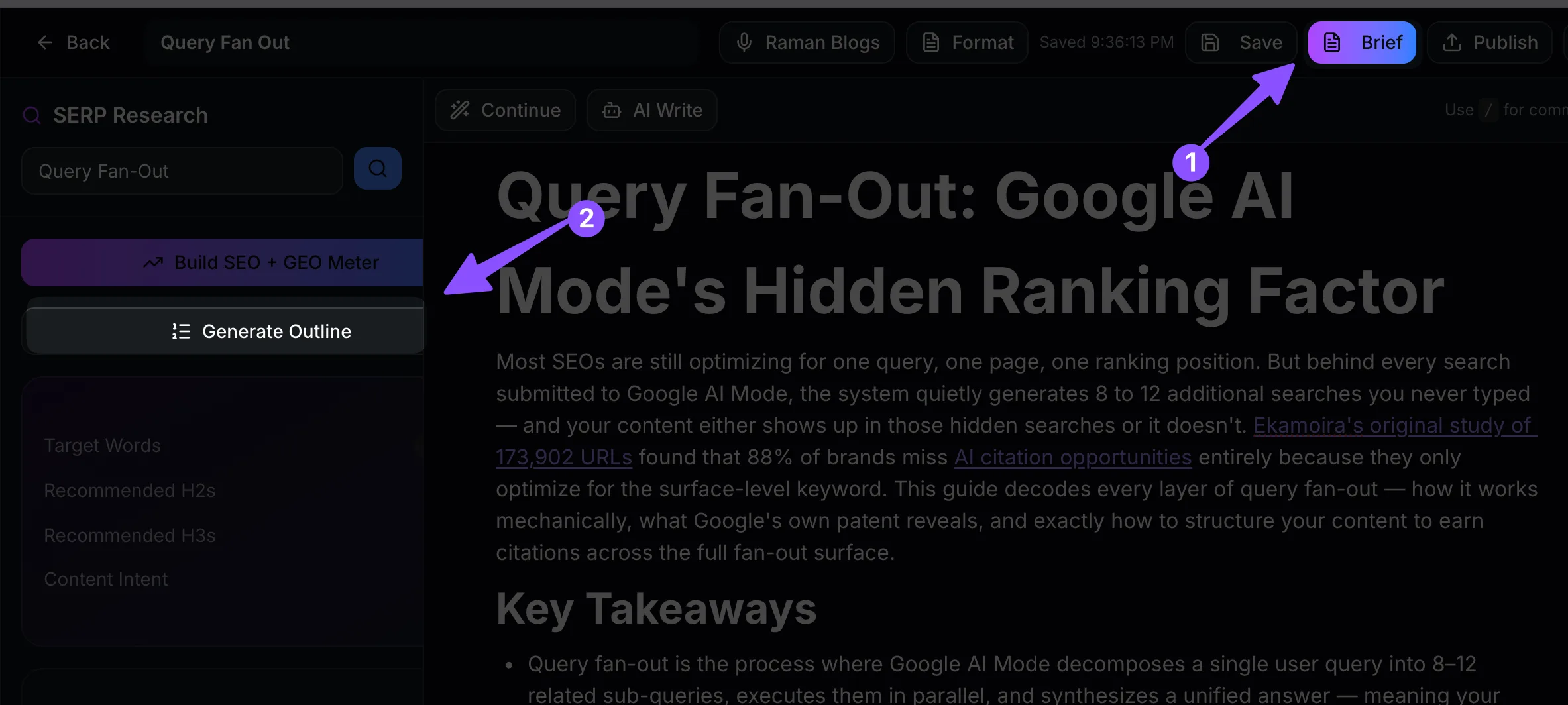

Now it's time to write. You have two options: "Brief" writes the whole piece in one go in your tone and format, and "Generate outline" writes from an outline.

Start writing, and when you're done, save the piece as a draft or publish it to your WordPress or Ghost website.

There are more features, such as auto internal linking, statistics, and image generation. Check them out here.

Tip: Don't worry that most of these sub-questions show zero search volume in Semrush or Ahrefs. That is expected. 95% of fan-out sub-queries are invisible to standard keyword tools. The goal is topic coverage, not keyword volume.

Structure Content for Chunk-Level Extraction

AI Mode doesn't cite full pages — it extracts passages, typically 134–167 words in length, according to Ekamoira's optimal passage length research. Each passage is evaluated independently as a potential answer to a specific sub-query.

This changes how you should write individual sections. Every H2 section of your article is effectively a candidate passage for a different sub-query. The AI evaluates whether that section, standing alone, provides a complete, direct answer to a specific question. Sections that require the reader to have read the previous section to understand them are harder to extract and cite.

Tutorial: How to restructure an existing blog section for chunk-level extraction in 3 steps

1. Add a direct-answer opening sentence. The first sentence below every H2 heading should state the answer to the implicit question of that section heading. If your heading is "What Is E-E-A-T?" your first sentence should directly define it rather than introduce the topic or explain why it matters.

2. Contain the answer within 150–175 words. Write each section so the complete answer fits in 150–175 words without requiring context from other sections. This matches the optimal extraction length and ensures the section functions as a standalone passage.

3. End each section with a concrete implication. The final 1–2 sentences should explain what the information means in practice. This signals to the AI that the passage is complete and actionable, not a fragment of a larger explanation.
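The word-count part of these steps is easy to check automatically. The sketch below assumes your draft is stored as Markdown with `## ` marking each H2 section, which is an assumption about your workflow rather than a requirement. The function names are hypothetical, and the 150–175 range is taken from the guideline above.

```python
import re

# Word range taken from the 150-175 word guideline above; adjust as needed.
MIN_WORDS, MAX_WORDS = 150, 175


def split_sections(markdown_text: str) -> dict[str, str]:
    """Split a Markdown document into {H2 heading: body text}. Assumes '## ' headings."""
    parts = re.split(r"^## +(.+)$", markdown_text, flags=re.MULTILINE)
    # parts = [preamble, heading1, body1, heading2, body2, ...]
    return {parts[i].strip(): parts[i + 1].strip() for i in range(1, len(parts) - 1, 2)}


def audit_sections(markdown_text: str) -> list[tuple[str, int, str]]:
    """Return (heading, word_count, verdict) for each H2 section."""
    report = []
    for heading, body in split_sections(markdown_text).items():
        count = len(body.split())
        if count < MIN_WORDS:
            verdict = "too short"
        elif count > MAX_WORDS:
            verdict = "too long"
        else:
            verdict = "in range"
        report.append((heading, count, verdict))
    return report


if __name__ == "__main__":
    sample = "## What Is E-E-A-T?\n" + "word " * 160 + "\n## Why It Matters\n" + "word " * 40
    for row in audit_sections(sample):
        print(row)
```

A word count cannot tell you whether a section opens with a direct answer or closes with a concrete implication, so this check complements the manual read-through rather than replacing it.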

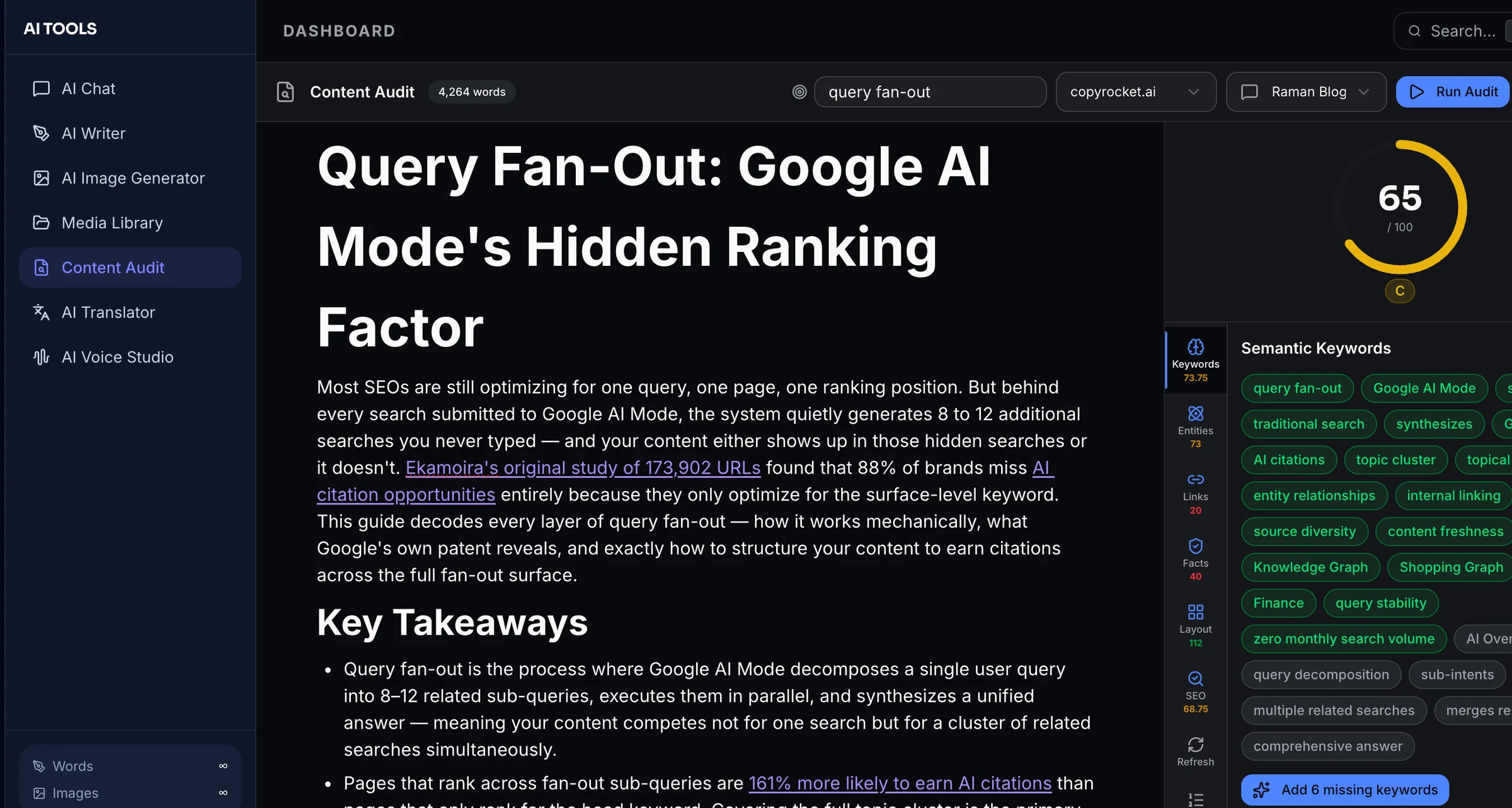

Audit your content using our GEO Content Editor: you can use our AI content auditor to audit your content against 20+ GEO and SEO parameters.

Tip: After rewriting a section using this structure, test it by reading only that section in isolation. If you can understand the full answer without reading anything else on the page, the section is extraction-ready.

Build Hub-and-Spoke Architecture for Full Topic Coverage

A single article — no matter how long — cannot cover an entire fan-out cluster. A 5,000-word guide about "content marketing" still cannot address every sub-query the AI generates when users search the topic, because the sub-query space is too large and too variable.

The hub-and-spoke architecture solves this by distributing coverage across multiple interconnected pages:

The hub page covers the primary topic broadly, links to every spoke page, and serves as the entry point for the topic cluster.

Each spoke page covers one sub-topic exhaustively, links back to the hub, and links to related spokes.

Together, they cover the fan-out surface comprehensively. The hub earns citations for broad sub-queries. Each spoke earns citations for its specific sub-topic sub-queries. The internal links between them signal to Google that the whole cluster is the authoritative source on this topic.
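One way to verify the linking rules described above is a small audit over your internal link map. In this sketch the `links` dictionary is a hand-written, hypothetical site map (in practice you might export it from a crawler), and `audit_cluster` is an illustrative name. It checks only the two rules stated here: the hub links to every spoke, and every spoke links back to the hub.

```python
# Hypothetical site map: page URL -> set of internal URLs it links to.
links = {
    "/onboarding": {"/onboarding/remote-teams", "/onboarding/30-60-90-plan"},
    "/onboarding/remote-teams": {"/onboarding", "/onboarding/30-60-90-plan"},
    "/onboarding/30-60-90-plan": set(),   # missing link back to the hub
    "/onboarding/kpis": {"/onboarding"},  # hub does not link to this spoke yet
}


def audit_cluster(hub: str, spokes: list[str], links: dict[str, set[str]]) -> dict[str, list[str]]:
    """Report spokes the hub fails to link to, and spokes that fail to link back."""
    return {
        "hub_missing_links_to": [s for s in spokes if s not in links.get(hub, set())],
        "spokes_missing_backlink": [s for s in spokes if hub not in links.get(s, set())],
    }


if __name__ == "__main__":
    spokes = ["/onboarding/remote-teams", "/onboarding/30-60-90-plan", "/onboarding/kpis"]
    for issue, pages in audit_cluster("/onboarding", spokes, links).items():
        print(issue, pages)
```

Running a check like this each time a new spoke goes live helps catch the common case where the spoke is published but the hub is never updated to link to it.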

Real-life scenario: An HR software company wants AI visibility for "employee onboarding." Their hub page covers the topic broadly. Their spokes cover: onboarding software vs. HRIS (for the comparison sub-query), onboarding for remote teams (for the context sub-query), 30-60-90 day onboarding plans (for the how-to sub-query), onboarding cost benchmarks (for the pricing sub-query), and onboarding metrics and KPIs (for the measurement sub-query). When a user asks "how do I set up employee onboarding for a remote team," the AI fans out into all of those sub-queries — and this company's content covers every branch.

Tip: Start building your hub-and-spoke cluster by first publishing the hub page, then creating spoke pages one by one over 4–8 weeks. As each spoke goes live, update the hub to link to it. This incremental approach builds topical authority progressively rather than requiring a full content overhaul upfront.

Optimize for Comparison and "vs." Sub-Queries

Similarweb's analysis found that fan-out heavily weights comparison sub-queries. When someone searches for nearly any product, service, or strategy, the AI routinely generates sub-queries comparing it to alternatives: "X vs. Y," "X for beginners vs. advanced," "X with budget constraint," and "X alternatives."

If your content doesn't have dedicated comparison sections or pages, you are invisible to this entire class of sub-queries — which often represents a significant share of the fan-out surface for commercial topics.

Tip: For every product or service you cover, create a dedicated comparison page or a prominent comparison section in your main guide. Use headings like "[Your Product] vs. [Competitor]" or "When to Choose [Option A] Over [Option B]." These directly match the comparative intent sub-queries AI Mode generates.

The Fan-Out Instability Problem and How to Solve It

The 73% query instability finding deserves its own treatment because it undermines the most intuitive approach to fan-out optimization: trying to target specific sub-keywords as if they were traditional keywords.

Ekamoira's research shows that the sub-queries the AI generates for the same head keyword change between searches — sometimes dramatically. Two users searching "best CRM for small businesses" at the same moment can trigger different sub-query sets based on their inferred context. The AI adds, removes, or substitutes sub-queries based on signals that content creators have no visibility into.

This means building a content strategy around a fixed list of "target fan-out sub-keywords" will fail for the same reason that keyword-stuffing fails: the optimization target keeps moving.

The correct response to instability is breadth. Research shows that content covering 80% or more of the topical surface for a keyword retains 85.4% AI visibility despite the 73% instability rate. The logic is statistical: if your content covers 80% of the possible sub-query space, even a randomly generated set of 12 sub-queries will hit your content most of the time. If your content covers only 20% of the sub-query space, even a favorably generated set of sub-queries will miss you most of the time.
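The statistical intuition can be checked with a simple model. The sketch below assumes each generated sub-query independently lands on a topic you cover with probability equal to your coverage share. That independence assumption is a simplification introduced here for illustration. It is not Ekamoira's Fan-Out Decay Curve and does not attempt to reproduce the 85.4% figure.

```python
from math import comb


def expected_matches(coverage: float, n_sub_queries: int) -> float:
    """Expected number of sub-queries that land on covered topics."""
    return coverage * n_sub_queries


def prob_at_least(k: int, coverage: float, n_sub_queries: int) -> float:
    """Binomial tail: P(at least k of n sub-queries land on covered topics)."""
    return sum(
        comb(n_sub_queries, i) * coverage**i * (1 - coverage) ** (n_sub_queries - i)
        for i in range(k, n_sub_queries + 1)
    )


if __name__ == "__main__":
    for coverage in (0.8, 0.2):
        print(
            f"coverage {coverage:.0%}: "
            f"expected {expected_matches(coverage, 12):.1f} of 12, "
            f"P(at least 6) = {prob_at_least(6, coverage, 12):.3f}"
        )
```

Under this model, 80% coverage yields an expected 9.6 matching sub-queries out of 12, while 20% coverage yields an expected 2.4, which is the gap the paragraph above describes in words.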

Tip: Aim for 80% topical coverage rather than 100% precision targeting. Use the coverage matrix you built in the fan-out mapping tutorial above to identify which sub-topics you're missing, then close the largest gaps first. You don't need to cover everything — you need to cover enough that instability can't consistently exclude you.

How Fan-Out Differs Across Google, ChatGPT, and Perplexity

Query fan-out is not unique to Google AI Mode — it operates across all major AI search platforms, but with important differences in scale, mechanism, and citation behavior.

Ekamoira's cross-platform analysis found a Cross-Platform Fan-Out Index weighting of Google (0.45), ChatGPT (0.30), and Perplexity (0.25) for current AI search visibility — meaning Google's fan-out still dominates total AI search exposure, but the other platforms represent a combined 55% that SEOs focusing only on Google are missing.
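The source does not spell out how the index is calculated, so the following is only a sketch under the assumption that it is a plain weighted sum of per-platform visibility scores between 0 and 1. The weights are the ones quoted above. The visibility inputs and the `fan_out_index` function name are hypothetical.

```python
# Weights as quoted above; the per-platform visibility scores are made-up inputs.
WEIGHTS = {"google": 0.45, "chatgpt": 0.30, "perplexity": 0.25}


def fan_out_index(visibility: dict[str, float]) -> float:
    """Weighted sum of per-platform visibility scores (each expected in 0..1).

    Platforms missing from the input are treated as zero visibility.
    """
    return sum(weight * visibility.get(platform, 0.0) for platform, weight in WEIGHTS.items())


if __name__ == "__main__":
    google_only = {"google": 0.6}
    all_platforms = {"google": 0.6, "chatgpt": 0.5, "perplexity": 0.4}
    print(f"Google only:   {fan_out_index(google_only):.2f}")
    print(f"All platforms: {fan_out_index(all_platforms):.2f}")
```

With these example inputs, a brand visible only on Google scores 0.27, while the same brand with moderate visibility on all three platforms scores 0.52, which illustrates how much of the index sits outside Google.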

Google AI Mode: 8–12 sub-queries per standard search, passage-level focus, draws from Search index plus Shopping Graph, Knowledge Graph, Finance, and Maps databases. Deep Search can issue hundreds of sub-queries with a multi-minute completion window.

ChatGPT with web search: 4–20 sub-queries depending on query complexity, applies "modifier injection" (adding qualifiers like "how to," "best," "vs," "for beginners" to generate variants), hits Bing's index via API. The modifier injection pattern means content with clear modifier-specific sections (beginner guides, comparison sections, step-by-step tutorials) earns disproportionate citations.

Perplexity: 3–8 sources per response, 358ms median latency (fastest of the three), highly recency-weighted. Perplexity's citation pattern favors fresh, specific, and sourced content — making it the platform where regularly updated content with external citations gets the highest citation rate relative to its size.
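To plan content around the modifier pattern described for ChatGPT, you can generate the likely variants of a head query and check whether your pages have a section for each. The modifiers below are the ones quoted above. The templates that place them around the query, and the `inject_modifiers` function, are hypothetical and do not represent how any platform actually builds its sub-queries.

```python
# Modifiers quoted in the text above; the templates that place them are hypothetical.
MODIFIER_TEMPLATES = {
    "how to": "how to choose {q}",
    "best": "best {q}",
    "vs": "{q} vs alternatives",
    "for beginners": "{q} for beginners",
}


def inject_modifiers(head_query: str) -> list[str]:
    """Return one query variant per modifier, skipping modifiers already in the query."""
    padded = f" {head_query.lower()} "
    return [
        template.format(q=head_query)
        for modifier, template in MODIFIER_TEMPLATES.items()
        if f" {modifier} " not in padded
    ]


if __name__ == "__main__":
    for variant in inject_modifiers("CRM software"):
        print(variant)
```

Each variant maps naturally to a section type mentioned above: a step-by-step tutorial, a "best of" roundup, a comparison section, and a beginner guide.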

Final Thoughts

Query fan-out is not a technical curiosity — it is the engine behind every AI Mode response, and it explains precisely why so many brands with strong traditional SEO are invisible in AI search. The 88% visibility gap, the 73% sub-query instability, and the 95% zero-search-volume figure all point to the same conclusion: the entire framework of keyword-first content strategy was built for a search environment that no longer handles most informational queries.

The shift to fan-out optimization requires a different starting point. Instead of asking "what keyword do I want to rank for?" ask "what is every question someone exploring this topic would eventually want answered?" Build a content cluster that covers 80% of that question space, structure each section for standalone chunk extraction, link everything together in a hub-and-spoke architecture, and track your AI citation frequency — not just your rankings.

Start this week: run the six-step fan-out mapping tutorial on your most important topic, build the coverage matrix, and identify your three largest sub-topic gaps. Those gaps are where your AI visibility is leaking. Close them, and the citations follow.
